As reported on Retraction Watch today, folks interested in the field of publications and retractions are “geeking out” at a new study from authors at Thomson Reuters, who mined the ISI Web of Science database to look for trends in retractions.

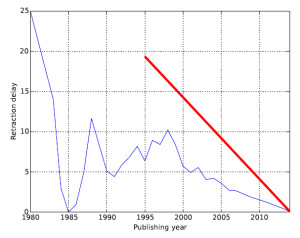

Among their key conclusions, drawn from Figure 1c of the paper, is a downward trend in the time delay between publication and retraction.

The problem is that such a conclusion is a self-fulfilling prophecy. The study was based on a 2014 version of the database, so, for example, there cannot possibly be any papers published in 2012 with more than a 2-year delay to retraction, because not enough time has passed yet! Here’s another way to look at the data, with the red line indicating the maximum possible retraction delay for a paper published in each year.

Are we to believe that, in the coming years, no papers from 2005 onward will turn out to take 10 years to retract? Of course not: those papers are out there, and as they are retracted they will raise the average retraction delay for their publication-year cohort.
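To make the argument concrete, here is a minimal Python sketch. It assumes, purely for illustration, that every publication-year cohort has the same hypothetical spread of retraction delays (0 to 15 years, evenly distributed) and that the database snapshot is from 2014; only retractions that have already happened by the snapshot year are counted. This effect is sometimes described as right truncation. The names `TRUE_DELAYS` and `observed_mean_delay` are made up for this example, and none of the numbers come from the paper.

```python
from statistics import mean

# Hypothetical delays (in years) from publication to retraction.
# The SAME distribution is assumed for every publication year, so the
# true average delay never changes (7.5 years here).
TRUE_DELAYS = list(range(0, 16))

SNAPSHOT_YEAR = 2014  # year of the database version used in the study


def observed_mean_delay(pub_year, snapshot_year=SNAPSHOT_YEAR, delays=TRUE_DELAYS):
    """Mean delay among retractions already visible at the snapshot.

    A paper published in pub_year can show a delay of at most
    snapshot_year - pub_year years; longer delays have not happened yet,
    so they are missing from the data.
    """
    max_possible = snapshot_year - pub_year
    visible = [d for d in delays if d <= max_possible]
    return mean(visible) if visible else None


# Print the apparent average delay for a range of publication years.
for year in range(1996, 2014, 2):
    print(f"{year}: {observed_mean_delay(year):.1f}")
```

Every cohort has the same true 7.5-year average, yet the printed averages fall steadily for recent publication years, simply because only the short delays have had time to occur.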

TL;DR: it’s too early to conclude a downward trend.

——————–

So what could have been done differently? Well, they could have taken the average delay for the whole data set (it looks to be about 7–8 years) and simply set that as the cut-off, ignoring any papers published between 2006 and 2014. In my estimation, doing so would have erased the apparent downward trend.
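The cut-off described above could be applied roughly as follows. This is a minimal sketch, assuming hypothetical per-paper records of (publication year, retraction year); the function names `drop_recent_cohorts` and `mean_delay_by_year`, the sample records, and the 8-year cut-off are illustrative assumptions, not anything taken from the paper or its data.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical (publication_year, retraction_year) pairs, for illustration only.
RECORDS = [
    (2000, 2002), (2000, 2010), (2003, 2005), (2003, 2013),
    (2006, 2012), (2009, 2010), (2012, 2013),
]


def drop_recent_cohorts(records, snapshot_year, cutoff_years):
    """Keep only papers whose cohort has had at least cutoff_years of follow-up."""
    last_year_kept = snapshot_year - cutoff_years
    return [(pub, ret) for pub, ret in records if pub <= last_year_kept]


def mean_delay_by_year(records):
    """Average publication-to-retraction delay for each publication year."""
    delays = defaultdict(list)
    for pub, ret in records:
        delays[pub].append(ret - pub)
    return {year: mean(ds) for year, ds in sorted(delays.items())}


kept = drop_recent_cohorts(RECORDS, snapshot_year=2014, cutoff_years=8)
print(mean_delay_by_year(kept))
```

Running `mean_delay_by_year(RECORDS)` on the unfiltered sample would also list the 2009 and 2012 cohorts with one-year averages, which are exactly the short, still-accumulating delays the cut-off is meant to exclude. Choosing `cutoff_years` is a trade-off: a longer cut-off leaves fewer long-delay retractions missing from the kept cohorts, at the cost of discarding more recent data.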

——————–

The other major issue bearing on this outcome is that the authors are based at Thomson Reuters, a large publishing conglomerate. The paper contains no conflict-of-interest statement, but I do find it rather “convenient” that a group of people paid by the publishing industry found a favorable trend in how quickly the industry deals with problems as they arise. In my own experience (an N=1 anecdote), the delay for publishers to deal with problem papers has either stayed flat or risen recently, owing to the growing volume of this kind of work.